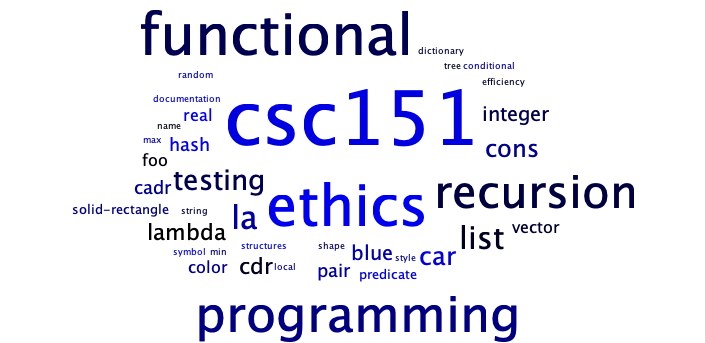

CSC 151: Functional Problem Solving

- Instructors: Peter-Michael Osera (sections 01 and 02), Leah Perlmutter (section 03)

- Class Meeting Location and Times: Noyce 3813

- Section 01: 8:30–9:50 AM CT

- Section 02: 10:00–11:20 AM CT

- Section 03: 2:30–3:50 PM CT

- Instructor Office Hours

- Peter-Michael Osera: Noyce 2811, by appointment: https://osera.cs.grinnell.edu

- Leah Perlmutter: Noyce 3811, by appointment: https://calendly.com/leahperl

- Mentors: Jacob Bell (section 01), Owen Block (section 02), Tiffany Yan (section 03)

- Mentor sessions: TBD

- Evening Tutors: Caelan Bratland, Ethan Hughes, Ishita Sarraf, Boston Gunderson, Dieu Anh Trinh, Alma Ordaz, Charles Wade, Tiffany Tang, Avaash Bhattarai

- Evening Tutor Sessions: TBD

Welcome to CSC 151! In this class, you will learn computer programming using the Scamper programming language. You do not need any prior knowledge of computer science or programming.

Acknowledgements

Materials found in this course have been adapted from prior CSC 151 offerings from many instructors over the years: Eric Autry, Charlie Curtsinger, Sarah Dahlby Albright, Janet Davis, Nicole Eikmeier, Fahmida Hamid, Priscilla Jiménez, Barbara Johnson, Titus Klinge, Peter-Michael Osera, Leah Perlmutter, Samuel A. Rebelsky, John David Stone, Anya Vostinar, Henry Walker, and Jerod Weinman.

CSC 151 (Functional Problem Solving, Fall 2024)

About

- Instructors: Peter-Michael Osera (sections 01 and 02), Leah Perlmutter (section 03)

- Class Meeting Location and Times: Noyce 3813

- Section 01: 8:30–9:50 AM CT

- Section 02: 10:00–11:20 AM CT

- Section 03: 2:30–3:50 PM CT

- Instructor Office Hours

- Peter-Michael Osera: Noyce 2811, by appointment: https://osera.cs.grinnell.edu

- Leah Perlmutter: Noyce 3811, by appointment: https://calendly.com/leahperl

- Mentors: Jacob Bell (section 01), Owen Block (section 02), Tiffany Yan (section 03)

- Mentor sessions: Sunday 6-7 pm in Noyce 3813, Thursday 8-9 pm in Noyce 3819

- Evening Tutors: Caelan Bratland, Ethan Hughes, Ishita Sarraf, Boston Gunderson, Dieu Anh Trinh, Alma Ordaz, Charles Wade, Tiffany Tang, Avaash Bhattarai

- Evening Tutor Sessions: Sundays, 3–5 PM; Sundays through Thursdays, 7–10 PM in Science 3813 and 3815

Welcome to CSC 151! In this class, you will learn computer programming using the Scamper programming language. You do not need any prior knowledge of computer science or programming.

Learning Objectives

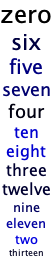

This course covers 18 learning objectives (LOs).

- Unit 1 (Weeks 1–4)

- Decomposition. Decompose a computational problem into smaller sub-problems amendable to implementation with functions.

- Procedural abstraction. Take a concrete implementation in Racket and create a procedure that generalizes that behavior.

- Tracing. Trace the execution of a Racket program using a substitutive model of computation.

- Primitive types. Express basic computations over primitive values and their associated standard library functions.

- Conditionals. Use Boolean expressions and conditional operations to produce conditional behavior.

- Testing. Test programs according to good software engineering principles.

- Unit 2 (Weeks 5–8)

- Documentation. Document programs according to good software engineering principles.

- Local bindings. Refactor redundancy and add clarity in computations with let-bindings.

- Lists. Manipulate lists with fundamental higher-order list functions.

- List recursion. Design and write recursive functions over lists.

- Numeric recursion. Design and write recursive functions over the natural numbers.

- Higher-order programming. Write procedures that take procedures as parameters and return procedures as results.

- Unit 3 (Weeks 9–13)

- Lambda-free anonymous procedures. Use section and composition to simplify computations.

- Dictionaries. Design and write functions that use dictionaries.

- Vectors. Design and write functions (potentially recursive functions) that use vectors.

- Data abstraction. Design data structures to separate interface from implementation.

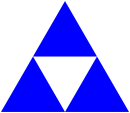

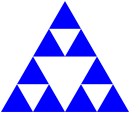

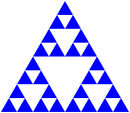

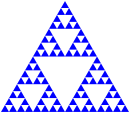

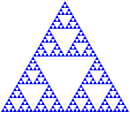

- Tree recursion. Design and write recursive functions over trees.

- Running time. Use a mental model of computation to count the relevant number of operations performed by a function.

Communication

Course Website : This website contains all courses policies. Course materials and homework deadlines will be posted here as they become available. Familiarize yourself with the website so you know where to find everything.

Email : Your instructor will send course announcements via email. You are responsible for reading all email from your instructor.

Microsoft Teams : Our class has a Teams channel for Q&A shared by all three sections. If you have a question and others in the class could benefit from its answer, please post in the teams Q&A channel.

Getting in touch with your instructor : If you need to get in touch with me privately, use email or teams. We try to reply to messages within about 24 hours, excluding weekends and holidays. If you do not hear back within that amount of time, please send a reminder.

Gradescope : You will submit assignments via gradescope. Please confirm that you have been added to this class on gradescope.

Class related meetings and help resources

Class meetings : Most days of class are lab days. Your instructor will make announcements and might briefly present concepts. Most of the time, you will collaborate with your lab team on programming practice problems.

Evening tutors : 5 days per week, peer educators will be available in our classroom during certain hours to help you with homework questions. You can drop in at any time to work on your homework or ask a question.

Mentor sessions : 3 times per week, a peer educator will hold a supplementary session where they might offer a review of concepts from class or practice problems. You should show up at the start of the session and stay until the end.

Informal time : You are encouraged to come to our classroom to study and work at any time that the room is not reserved for class or a meeting. This is an opportunity to studycollaborate with peers and form community.

Office hours : Your instructor will hold office hours each week. This time is for you to meet with your instructor and talk about any course related matter that you like!

Individual tutors : If you are taking advantage of the resources above and need more help, contact your instructor to request an individual tutor to meet with you one-on-one.

Academic advising : Grinnell offers academic coaches available to you as a resource to help you develop learning strategies and support you in your learning.

Deliverables

Reading responses : Prior to each class meeting, you will read an online reading assignment about computing concepts, work through code examples in a programming environment, and answer a few questions about the reading

Labs : During each class meeting, you will work with a partner to complete programming exercises to practice the concepts from the readings

Take-Home Assessments Homeworks : About once per week you will complete an individual programming assignment where you apply and extend concepts from readings and labs. No programming assignment on midterm exam weeks.

Final project : The final programming assignment will be more open ended and completed in teams.

Quizzes : About once per week, you will complete an individual, 15-minute quiz in class on paper (time extended according to any accommodations)

Exams : 4 times during the semester, you will complete an individual midterm exam in class on paper, lasting the entire class period (time extended according to any accommodations)

Pre-reflections : Before each take-home assessment homework and each exam, you will answer some reflection questions to help you prepare and feel confident to dive in.

Post-reflections : After each take-home assessment homework and each exam, you will answer some reflection questions to help you understand what went well and what went poorly.

Collaboration and Resources

- Outlook

- Do collaborate in your learning

- Collaborate in ways that support rather than undermining your learning

- We'll talk about this throughout the semester!

- On individual assignments

- Don't do somebody else's work for them or let them do yours

- Don't turn in the same or highly similar work

- Deliverables

- Reading responses

- Encouraged to work with others to understand the readings

- Write your own answers to the reading response questions

- You can get help from others but make sure you are able to explain your answers yourself

- Labs

- Typically completed in teams of 2

- Both partners should contribute equally to all parts of the lab

- You may ask other teams for help

- Make sure every team member can explain your team's answers

- Take-home Assessments Homeworks

- Individually completed

- As additional resources, use only what you find on the course website

- You may get help from course staff, including evening tutors, mentors and instructors

- You may not discuss the assessment or get help from peers, inside or outside the class

- Quizzes

- Completed individually in class

- No form of collaboration is permitted

- Exams

- Completed individually in class

- No form of collaboration is permitted

- Final project

- Completed in groups of 3–4

- All members should contribute equally to the project

- You may ask others for help

- Reading responses

Grading

Deliverables

- Reading responses

- Graded S/N based on whether you answered the assigned questions with a good faith effort

- S = satisfactory, N = Not satisfactory

- Labs

- Graded S/N based on whether you answered the assigned questions with a good faith effort

- S = satisfactory, N = Not satisfactory

- Take-home Assessments and the Final Project

- Graded EMRN based on correctness and following instructions

- E = Exceeds expectations

- M = Meets expectations

- R = Needs revision

- N = Not complete (did not make a good faith effort)

- Criteria for each letter grade are given on the homework instructions for each assessment.

- Graded EMRN based on correctness and following instructions

- Learning Objectives (Quizzes and Exams)

- You will demonstrate your mastery of each Learning Objecive (LO) in assessments (quizzes or exams).

- Each quiz gives you an opportunity to demonstrate mastery of one LO.

- Each exam gives you an opportunity to demonstrate mastery of every LO that has been covered in class up until the date of the exam. There will be one exam problem for each LO.

- Each LO is graded S/N

- S = Satisfactory (demonstrated mastery)

- N = Not Yet Satisfactory (did not yet demonstrate mastery)

- Once you have demonstrated mastery of a certain LO, you do not need to be assessed on that LO again. That is, you can skip exam problems about LOs you have already mastered.

Final grade

Major letter grades for the course are determined by tiers, a collection of required grades from your demonstration exercises and core exams. You will receive the grade corresponding to the tier for which you meet all of the requirements. For example, if you qualify for the A tier in one category and the C tier in another category, then you qualify for the C tier overall as you only meet the requirements for a C among all the categories.

| Tier | Take-home Assessments (8) | Core (18) | Project (1) |

|---|---|---|---|

| C | No Ns, at most 3 Rs, at least 1 Es | At least 10 Ss | R |

| B | No Ns, at most 2 Rs, at least 3 Es | At least 12 Ss | M |

| A | No Ns, at most 1 R, at least 5 Es | At least 14 Ss | E |

- D: two of the requirements of a C are met.

- F: zero or one of the requirements of a C are met.

Plus/minus grades

To earn a plus/minus grade, you must have completed one tier’s requirements and partially meet the next tier’s requirements. This will arise in two situations: C/B and B/A. For example, you may completely meet the requirements of a C and meet the requirements of a B for take-home assessments but not for core learning outcomes.

- If you have completed two of the upper tier's requirements, then you earn a minus grade for the higher tier, i.e., B- if you are between a C and B.

- If you have completed one of the upper tier's requirements, then you earn a plus grade for the lower tier, i.e., C+ if you are between a C and B.

Be aware that if you are at an A tier for one deliverable category but at a C tier for another, then you fully qualify for the C tier and partially meet the requirements of the B tier and thus would be considered for plus/minus grades in the B/C range.

Timely Work

You may miss turning in at most six timely work deliverables (reading questions, labs, pre- and post-reflections) without penalty. After the first six deliverables, your overall letter grade will lower by one-third of a letter grade (i.e., A becomes A-, B- becomes a C+, C becomes a D) for every two additional deliverables you miss. The following table summarizes this policy for concrete numbers of missed timely work deliverables through 12, although the policy extends to any number of missed assignments.

| Missed timely work | Letter adjustment |

|---|---|

| 0–6 deliverables | -0 |

| 7 deliverables | -1/3 |

| 8 deliverables | -1/3 |

| 9 deliverables | -2/3 |

| 10 deliverables | -2/3 |

| 11 deliverables | -1 |

| 12 deliverables | -1 |

Deadlines and Late Days

Check the schedule to see your assignments. Check Gradescope to see the time of day that each assignment is due. Due times are typically as follows:

Readings : 10 pm the day BEFORE it appears on the schedule

Labs : 10 pm on the next lab day (except around breaks and exams)

Take-Home Assessments : 10 pm the day it appears as "due" on the schedule

Reflections : 10 pm the day it appears on the schedule

Late Days

- Late days are a currency that you can spend to turn in assignments late.

- You start the semester with 10 late days.

- You are responsible for keeping track of your own late days.

- You may spend late days on reading responses, labs, pre-reflections, post-reflections, take-home assessments, and take-home assessment revisions.

- You may spend one late day to submit any of the named assignments up to 24 hours late.

- You may spend up to two late days on any given assignment, for a maximum lateness of 48 hours on that assignment.

Redo and Revision Opportunities

- Learning Objectives (Quizzes and Exams)

- When you earn an N on an LO, you can attempt to demonstrate mastery on that LO again on every following exam.

- The final exam allows you to redo past LOs but does not introduce new LOs, ensuring that you get a minimum of 2 tries to earn an S on every LO.

- Take-Home Assessments

- You may revise and resubmit take-home assessments after receiving feedback.

- You may submit up to 2 revision assessments in each weeklong revision period.

- Revision periods are shown in the revision form below.

- When revised work is graded, the new grade, if higher, replaces the old grade. Submitting revised work cannot lower your grade.

- To be eligible for resubmission, you must have completed the assessment homework in good faith on your first attempt, earning at least an R. Redo opportunities cannot be used as a way to skip take-home assessments and do them later.

- Resubmit an assessment by filling out the Take-Home Assessment Revision Request Form and resubmitting your work on gradescope. The form also clarifies some nuances of the revision policy.

- You may revise and resubmit take-home assessments after receiving feedback.

- Reading responses, labs, and reflections are graded based on good faith engagement with the assigned questions and cannot be revised for credit.

Attendance

We expect you to attend class every day because collaborative learning is such an integral part of the class. If you need to be absent, talk to your instructor as soon as you know. This means a week or more in advance for planned absences such as religious observances and sporting events.

Sick Policy

Please stay home when you are sick. Wait to come back to class until you truly feel well enough to learn. Each day that you will miss class due to illness, contact your instructor before class (or as soon as possible).

When coming back to class, wear a mask until there is no more than a normal concern of contagion. Protecting the people around you from your illness is a matter of professionalism and personal respect.

Late Work due to Illness and Time Away

You are responsible for checking the course website to see what is assigned while you are out and for completing all assigned work.

The late day and redo policies are designed to offer flexibility so that late work resulting from one or two minor to moderate illnesses or unforseen circumstances will not lower your grade in the class. If you find yourself needing to take lots of time away, please talk to your instructor about getting additional late days or increasing the number of late days you can spend on a given assignment.

Access Needs

To ensure that your access needs are met, I encourage individual students to approach me so we can have a discussion about your distinctive learning needs and how to meet them within the context of this course. In addition, Grinnell College makes formal accommodations for students with documented disabilities. Students with disabilities partner with the Office of Disability Resources to make academic accommodation letters available to faculty via the accommodation portal. You can reach Disability Resources staff via email at access@grinnell.edu, by phone 641-269-3089, or by stopping by their offices on the first floor of Steiner Hall.

Title IX and Pregnancy Related Conditions

Grinnell College is committed to compliance with Title IX and to supporting the academic success of pregnant and parenting students and students with pregnancy related conditions. If you are a pregnant student, have pregnancy related conditions, or are a parenting student (child under one-year needs documented medical care) who wishes to request reasonable related supportive measures from the College under Title IX, please email the Title IX Coordinator at titleix@grinnell.edu. The Title IX Coordinator will work with Disability Resources and your professors to provide reasonable supportive measures in support of your education while pregnant or as a parent under Title IX.

Course Tools (Fall 2024)

Development Tools

Communication

Core Exam Preparation

Core exams for the course are _timed, in-person, paper examinations. While you are not allowed to bring notes, the exam questions will contain references to appropriate library functions when appropriate.

Because the exams are paper-based, we encourage you to be intentional in your studying.

Programming on a computer is different from paper where you have nothing in the way of syntax highlighting and error checking.

While we are somewhat forgiving when it comes to little syntax issues, e.g., missing parentheses, you will not have time to mince over the syntax of a lambda!

Subsequently, you should be comfortable in writing down the basic constructs of our language on paper.

To prepare for the course's core exams, you should:

- Review the list of course learning outcomes for the exam found in the course syllabus.

- Review any quiz and exam questions that you missed as they will appear on the next exam!

- Take problems from previous assignments, e.g., reading problems, labs, and take-home assessments, and see if you can redo them on paper without support.

- Bring any questions that arise to the course staff!

Additionally, Professor Perlmutter's course website has examples of previous exam questions for you to review if you would like to know the format of a question for a particular learning outcome.

Assessment #1: image composition and decomposition

In the first few days of class, you have received a crash-course introduction to programming in Scheme, in particular with images. Furthermore, you also learned about algorithmic decomposition and its importance in computer programming. In this project, we'll practice these techniques further by playing around with images.

On Collaboration

Each student should submit their own responses to this project. You may not consult other students in the class as you develop your solution, but you may consult members of the course staff and tutors. If you receive help from anyone, make sure to cite them in your responses.

External and internal correctness

In this course, we're concerned about writing good code. What does that look like? Good programs have two qualities we're looking after:

- External correctness: Does the program behave correctly according to its specification?

- Internal correctness: Is the program designed well?

External correctness is observable in the sense that we can run a program and determine that its behavior is correct. In contrast, internal correctness concerns the design of our program: Is it readable? Does it follow the design guidelines outlined in the exercise write-up and otherwise adhere to good coding conventions?

External correctness is often a given---we always want to write programs that do the right thing. However, we'll find in this course that internal correctness is just as important! Computer programs are not just "consumed" by computers. Other people will read and even modify our programs. In particular, you will find that in three months (or perhaps sooner), you will feel like "another person", forgetting what you were thinking when you were designing the program. So it is important that we build habits that are conducive to writing readable code.

Playing around

As we may have discussed previously, programming is not a spectator sport (really, few things are in this world). You need to write programs to learn how to program. You often need to write programs to learn to think computationally. The labs and projects will be you primary vehicle for this sort of practice. This alone may be enough for some of you to master Racket programming. But for many people, you will need additional practice to truly master these concepts.

One way to do this is through "playing around." What we mean by this is programming for the purposes of exploring a programming language or its libraries, rather than a specific end-product. This is how many of us approach learning a new language. We may have a few starting points in our back pockets, but as we write, we are less concerned about finishing the task at hand as we are about understanding the new environment. This exploration usually involves investigating and answering questions such as "How do I do X in this language?" or "How does feature X that I don't understand compare to feature Y that I do understand?" or even "How does this language lead me to think differently about algorithm design?"

Because you are beginning programmers, your questions will likely be markedly simpler: "How can I even make a thing happen?" and "how do I type a thing?". But nevertheless, "playing around" lets you tackle some of those ideas. You might start with one our lab exercises that you developed with a peer as a starting point and then change the code in ways that are novel to you. Or you might start from scratch and try to reproduce something you have seen or written before. There is no right way to go about "play". Its the attitude that's important: one of exploration and asking and answering questions rather than focusing on the final product.

Turn-in details

For this mini project, you will create three files: spaceship.scm, freestyle.scm, and my-image-utils.scm.

The particular contents of each are detailed below.

Part the first: Rainbow spaceship

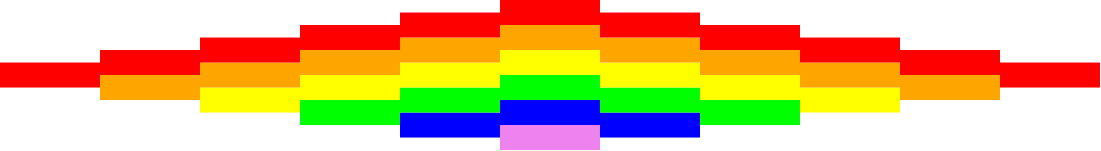

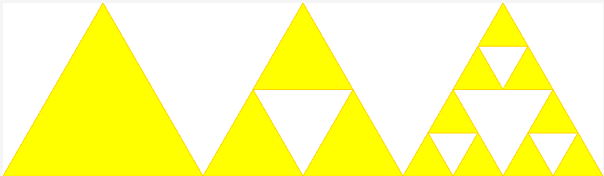

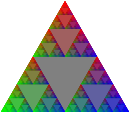

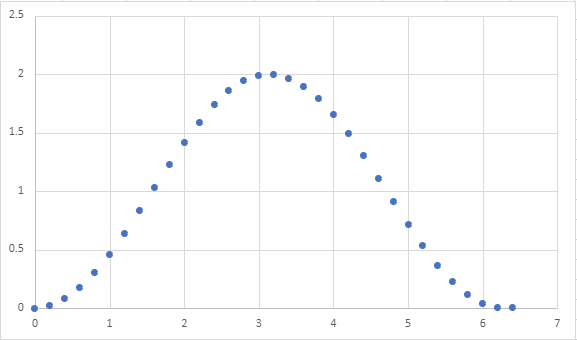

For this first part of the demo, your goal is to define an image called rainbow-spaceship that looks like this:

Here are the details of the rainbow-spaceship image:

- The spaceship composed of a collection of colored stripes, each of which are 100 pixels wide and 25 pixels tall.

- As the spaceship grows in height from left to right, a new colored stripe is added in rainbow order from top to bottom. The order of colors of the rainbow are red, orange, yellow, green, blue, and violet.

- The spaceship then shrinks in height past its center-point, losing a stripe from bottom-to-top order.

The "horizontal pyramid" effect is due to how the image library places sub-images with beside when they are different heights.

Smaller images are automatically centered vertically relative to the taller images.

For example the left-most red stripe is vertically centered relative to the red-orange two-stack of stripes next to it.

Make sure that the definition of rainbow-spaceship mimics its structure.

Also pay special attention to remove redundancy from your code using define and, as appropriate, functions.

We do not yet have the machinery to elegantly capture the growing, symmetric nature of the columns of the spaceship.

However, note the relationship between the stripes of each successive column.

How can you capture this relationship in code?

Please put your definition in the file spaceship.scm

Part the second: Freestyle

Now that you've had a taste for manipulating images and using define and functions to reduce redundancy, you will now get the opportunity to play around making images of some complexity.

As discussed, this is open-ended: we have no particular image for you to draw and only some requirements about how you design your program.

Feel free to try the following starting points:

- Take our image drawing / decomposition lab and improve on the pictures there.

- Find an image on the Internet and do you best to replicate it using the limited image functions we've discussed in the course. Keep in mind that your final image will likely be impressionistic in nature!

- Doodle! Start with a few shapes and try to build up interesting patterns from there.

To encourage you to practice algorithmic decomposition, your program must follow these design requirements:

-

Your image should contain no fewer than five smaller sub-images that you identify and codify in your program using the

definecommand. These sub-images should be independent of each other (i.e., not defined in terms of each other), but can then be combined together. -

Your image should employ at least one user-defined function that has at least one parameter that is employed in cutting down the code redundancy of your image in some way.

-

The names you

defineshould be evocative of what the image is. It should be pithy, a few words at most, but at the same time descriptive. Racket programming conventions say that these names should be in all lowercase with dashes between words, e.g.,names-like-this. -

Your program should include a

definethat is the overall image, which you should callmy-image. -

Your program should include introductory documentation, as below. (All of your Racket files should include similar documentation.)

(import image) ; freestyle.scm ; ; An amazing image of <....> I've created. ; ; CSC-151 Fall 2024 ; Homework 1, Part 2 ; Author: Stu Dent ; Date: 2024-09-31 ; Acknowledgements: ... ; (...code below here...) ; (define my-image...)

Other than this, there are no minimum requirements regarding limits, code size, or complexity. Have fun with it!

Please put your definitions in the file freestyle.scm.

Part three: Your own library functions

As you have likely noted, as your images and programs grow in complexity, it is helpful to write procedures (functions, subroutines) that encapsulate and parameterize a piece of code. For example, you may find that you regularly want to build "blocks" by overlaying an outline on a solid figure.

;;; (block size color) -> image?

;;; size : non-negative-integer?

;;; color : color?

;;; Create a square block of the specified size and color

(define block

(lambda (size color)

(overlay (square size "outline" "black")

(square size "solid" "color"))))

Write five (5) procedures that you think will be useful in building more complex images.

Create a list of five images, one build from each procedure, and call that list examples.

(define examples (list (block 20 "red") ...))

Once again, there are no minimum requirements regarding limits, code size, or complexity.

Please put our procedures and the examples list in the file my-image-utils.scm

Part four: Generalizing images

Take the image you generated in part two and turn it into a procedure, generate-my-image, with at least two parameters (e.g., color and size) so that someone can easily make variants of that image.

Provide a call to your procedure that generates the same image you used in part 2. Call it my-image-alt.

(define my-image-alt (generate-my-image ...))

Provide a call to your procedure that generates a substantially different image. Call it my-other-image.

(define my-other-image (generate-my-image ...))

All three new definitions (for generate-my-image, my-image-alt, and my-other-image) should go in the file freestyle.scm.

A note on additional complexity

You are under no obligation to use additional functions or language features beyond what we have introduced in the first week or so of the class.

However, You may feel limited by the functions we have discussed so far.

If so, you are free to reference the Scamper documentation for the image library for some of the functions available (see the "Reference" link at the top of the page). Note that that both the library and the documentation are "in process". If there's something you'd like, you might ask Prof. Osera about it.

Note that this documentation may not be entirely comprehensible to you yet! That is fine. If you choose to explore this library in more detail, we recommend experimenting with these functions in a separate file and figure out how they work before throwing them into your code. Remember, if you adapt any code from this library's documentation, you should cite that you did so in a comment in your code!

Partial rubric

In grading these assignment, we will look for the following for each level. We may also identify other characteristics that move your work between levels.

You should read through the rubric and verify that your submission meets the rubric.

Redo or above

Submissions that lack any of these characteristics will get an I.

[ ] Includes the three specified files (correctly named).

[ ] Includes an appropriate header on each file that indicates the course, author, etc.

[ ] Code runs in Scamper.

Meets expectations or above

Submissions that lack any of these characteristics will get an R or below.

[ ] In Part 1, creates the correct spaceship.

[ ] In Part 2, includes at least five sub-images.

[ ] In Part 2, includes at least one procedure.

[ ] In Part 2, the image is correctly named `my-image`.

[ ] In Part 3, includes five helper procedures.

[ ] In Part 3, each helper procedure has at least one parameter.

[ ] In Part 3, includes the required `examples` list, which has the required form.

[ ] In Part 4, the procedure has at least two parameters.

[ ] In Part 4, the procedure is correctly named `generate-my-image`.

[ ] In Part 4, there is a call to generate `my-image-alt`.

[ ] In Part 4, there is a call to generate `my-other-image`.

Exemplary / Exceeds expectations

Submissions that lack any of these characteristics will get an M or below.

[ ] In Part 1, code is concise and avoids repetition.

[ ] In Part 2, image is particularly interesting or creative.

[ ] In Part 3, one or more of the helper procedures is especially innovative.

[ ] In Part 4, `my-image-alt` appears the same as `my-image`.

[ ] In Part 4, `my-other-image` appears different from `my-image`.

[ ] In Part 4, decomposes the procedure.

MathLAN: Grinnell's GNU/Linux environment

Introduction

This course is conducted using a workshop format (a.k.a. a constructivist, collaborative, computing format); on most class days you will find yourself working on the computers in our classroom. You will quickly discover that while these computers have many similarities to the computers you have used in the past, there are also some differences. (When we started teaching this course, many students hadn't used computers at all and we had to teach things like how to use a Web browser and what the Web was. You will occasionally find comments in the readings and labs that reflect that different perspective.) In this document, we will explore some of the key issues you may need to consider as you work with the GNU/Linux computers that we prefer in computer science.

Operating systems and graphical user interfaces

A modern computer is much more than a bunch of circuitry. Most of us think of computers in terms of the operating system that they run and the graphical user interface that accompanies the operating system. Those terms may be new to you, so let us consider them briefly.

As its name suggests, an operating system (also "OS") is the system used for operating the computer. It is a large computer program that manages and simplifies most of the underlying hardware. The operating system is responsible for managing files, managing other programs, dealing with the keyboard, screen, and other peripherals, and much more.

In the old days of computing (e.g., when the more senior of the CSC 151 instructors started programming), you interacted with the operating systems almost exclusively by typing on a keyboard and seeing results on a screen (yes, we had evolved beyond punchcards). There was no mouse. To us, the operating system referred to the underlying capabilities.

These days, you interact with computers through a graphical user interface (also "GUI"). Its name is similarly clear: A GUI is an interface through which you use the computer, and it's a graphical (as opposed to textual or auditory) interface. Modern graphical user interfaces stem from work at Xerox PARC, although they were introduced to the broader consumer world through the Apple Macintosh. To most modern users, the GUI is indistinguishable from the OS. (Programmers may still find it useful to distinguish between them.)

The GNU/Linux operating system

In Grinnell's computer science department, we use an operating system called GNU/Linux. GNU/Linux is distinguished by being an Open operating system, which means that anyone who has the knowledge and desire to make modifications to the program code of the operating system is permitted to do so, and a Free operating system, which means that it doesn't have to cost you anything to install it on your computer, in contrast to the Macintosh OS, which used to have a list price of about 100. In fact, the GNU/Linux community uses "Free" in two ways, in the way we used it above (as in "Free Lemonade") and in the way we used "Open" (as in "Freedom").

Why do we use GNU/Linux rather than Macintosh OS or Windows, particularly since ITS seems to prefer Windows? One reason is that we consider GNU/Linux to be technically superior: It is less likely to crash, it is freer from viruses and other irritants, it has a much longer history of separating what the average user can do from what the administrator can do. More importantly, it is much more portable. You can sit down at any GNU/Linux computer on our network and have the same set of files naturally available. (Think about how many times you save a file on one Windows box on campus, forget to move it to your OneDrive, and then cannot access it elsewhere on campus. That will never happen on the GNU/Linux network.)

Many members of the department also have a philosophical preference for the Open Source and Free Software movements, of which GNU/Linux is an important part. We believe that good software should be free, in both senses of the word.

Xfce

GNU/Linux, unlike Macintosh OS X or Microsoft Windows, permits you to use a variety of GUIs on top of the same underlying OS. Our system administrator has chosen to use a GUI called Xfce as the default. Our experience suggests that Xfce provides an appropriate balance of power, configurability, and usability.

Xfce, like Microsoft Windows, provides a taskbar at the bottom of the screen. You will click icons on the taskbar to start applications. You may use a popup menu on the taskbar to "log out" when you are done with your work.

If you want to explore other GUIs (sometimes called Window managers), you can select your GUI when you log in to the MathLAN. Do so with caution, as some Window managers are very strange, and it may be difficult to figure out how to escape from them.

If you are interested, you can also find many ways to modify Xfce, such as moving the taskbar elsewhere.

Using GNU/Linux

So, what does this all mean for you, other than that the computer scientists at Grinnell worry about these things? It means that you will have to use an unfamiliar GUI in this course and in most future computer science courses you take. Fortunately, our configuration of Xfce is similar enough to other operating systems, particularly to Microsoft Windows, that you should find it fairly natural to use.

Like the Microsoft Windows workstations on campus, the GNU/Linux workstations require you to log in to use them. Although our GNU/Linux network once used an independent password system, it now uses the same system as the other computer systems on campus. The password system is designed so that no one, not even the MathLAN system administrator or your faculty member, will have access to your password.

Important GNU/Linux programs

In this course, you will be using a variety of programs. There are three that we consider particularly important.

- Firefox is the preferred Web browser in this course. You should be able to access Firefox through the icon in the taskbar that shows a small red animal holding a sphere.

- The terminal window supports textual interaction with the operating system. That is, it provides a kind of "textual user interface" (TUI) rather than a graphical user interface. At times, the terminal window provides the most convenient way to interact. You should be able to access the terminal window through the picture of the screen in the taskbar.

Making the most of the GNU/Linux environment

This is a class in computer science, not in using GNU/Linux. Hence, we will provide you with only the basic instructions for using GNU/Linux. It is, of course, possible to use the GNU/Linux system in more advanced ways. You may find it useful to talk to other folks who use the systems to learn particular tricks that they find valuable. We will also point out a few from time to time.

Here's one: Xfce supports multiple desktops. You can see a two-by-two grid of desktops in your taskbar, with small representations of each window. You can switch desktops by clicking on any of the four. You can also drag windows between desktops. Many people find it helpful to use separate desktops for separate tasks, such as one desktop for documentation and information and another desktop for programming. It's also useful to keep one desktop clear, so you can use it for looking at files. The corresponding lab will give you some opportunities to explore desktops.

Self checks

Self check 1: Terminology

Make a list of three important terms we used in this document and their meanings.

Self Check 2: Free and Freedom

What are the two meanings of "Free" associated with GNU/Linux? Why is each important?

Algorithm building blocks

Introduction

An algorithm is a set of instructions to accomplish a problem. As you might expect, the way we express an algorithm depends, in large part, on the audience for the algorithm. Consider the problem of making a set of chocolate chip cookies. For a beginning cook, we would have to carefully explain what it means to sift flour, the steps involved in creaming together butter and sugar, how to determine whether a cookie is done, and such. For an intermediate cook, we might not need to provide those details, but we would likely need to give an order in which ingredients are combined. For an expert cook, we might only list the ingredients and the recommended oven temperature and could assume that they would be able to figure out the rest.

Despite the wide variety of audiences and kinds of algorithms, you will find that there are a few basic "building blocks" that you will use as you construct algorithms, whether in a natural language or in a programming language. As you learn a new programming language, make sure to pay attention to how you express these blocks.

In this section, we will introduce these blocks conceptually. We'll spend the remainder of the course exploring how to realize these ideas in a computer program.

Basic building blocks (Predefined values and operations)

Even when you are writing an algorithm for a novice, you have to build upon some basic assumptions. In teaching someone to make a cookie, you can assume that they know what the different ingredients are (e.g., flour, sugar) and that they know some basic actions to do with those ingredients (e.g., stir). In teaching someone how to compute a square root, you assume that they know about numbers (including, for example, zero and one) and that they know how to work with those numbers (e.g., add, multiply). In teaching someone how to analyze a text computationally, you might assume that they know the parts of speech and, perhaps, how to parse a sentence.

Behind the scenes, computers "know" very little. But most programming languages and environments provide a rich infrastructure of values and operations on those values. We call these the predefined values and operations.

In most programming languages, you will be able to work not only with numbers, but also strings (sequences of characters), files, images, ordered collections of values, and much more. Of course, not all operations apply to all kinds of values: You would not expect to sift water or to compute the square root of a word. Hence, we often group similar kinds of values into what computer scientists refer to as a type, a collection of values and valid operations on those values.

Sequencing: ordering the operations

Most algorithms involve multiple steps. As you might expect, the order in which we perform steps can have a significant impact on the outcome of our algorithm. If we bake the ingredients of a cookie before mixing them together then we are likely to get something much less satisfactory than what we get when we mix before baking. Hence, a core aspect of most algorithms is the way we sequence the steps in the algorithm.

In some cases, you will find that you explicitly sequence the steps, telling the reader (often, the computer) to do one task, then the next, then the next. For example, you might tell a baker to shift together the dry ingredients before adding them to the liquid ingredients.

In other cases, you will express the order implicitly. For example, if you ask someone to compute 3+4x5, they should know to multiply 4x5 before adding three, even if you don't make that explicit. (Common practice suggests that on computers, we'll generally write "*" rather than "x" for the multiplication operation.)

You will discover that most programming languages have multiple ways to express both explicit and implicit ordering. Frequently, you make ordering explicit by placing the instructions in order; the computer executes them from first to last. In other cases, the ordering will be implicit, as in the computation of 3+4*5.

Variables: naming values

As you write algorithms, you will find it convenient to name things. In natural language algorithms, names are often implicit, such as "the dry ingredients" in a baking recipe. In computer languages, you will find that you more frequently need to explicitly name things, as in "let 'dry ingredients' refer to the combination of flour, baking soda, and salt". And, just as we care about the type of the built-in values, we might also specify the type of variables, using some variables to represent numbers and others to represent strings.

Computer scientists tend to refer to named values as variables, even

though they don't always vary. We try to choose descriptive names for

our variables to help the human reader of our programs understand their

purpose. For example, a reader might be momentarily confused if we used

my-name to refer to the number 5 or if we tried to multiple my-name

by 2.

Subroutines: naming helper algorithms

In writing longer algorithms, you will often find that you have to explain similar processes again and again. For example, to teach someone how to make a nut-butter and preserve sandwich, you might need to explain how to open a jar twice, once for the nut butter and once for the jar of preserves. Rather than repeating the instructions, we will find it helpful to make a separate algorithm that we can refer to in our main algorithm. For that particular example, we might have an "open jar" subroutine that we can use in our sandwich algorithm.

Computer scientists use a wide variety of names for these helper algorithms. Some just call them algorithms. Many refer to them as subroutines to emphasize that they are subordinate to the primary algorithm. In programming languages, we usually call them procedures or functions.

Like the functions you've encountered in your study of mathematics, subroutines often take inputs (e.g., a closed jar) and return a newly computed value (e.g., an open jar). We tend to refer to those inputs as parameters or arguments.

Some computer scientists are careful to distinguish between functions (whose primary purpose is to compute a value) and procedures (whose primary purpose is something other than computing a value) and between parameters (what we call the inputs to a subroutine when we define the subroutine) and arguments (what we call the inputs to a subroutine when we use the subroutine). We will be a bit more casual in our usage.

Conditionals: making decisions

We've considered four basic components of algorithms: the built-in operations, sequencing, named values, and subroutines. By themselves, those three components let you write some algorithms, but only fairly straightforward algorithms. To write more complex algorithms, you need more complex algorithm structures. One of the most important kinds of structures is the conditional, which lets you make decisions based on some condition. For example, if we are writing a program that generates sentences, even after choosing the verb, we'll have to use a different form of that verb depending on whether the subject is singular or plural. Those of a more mathematical mindset might consider the problem of computing the absolute value of a number: If the number is negative, we return negative one times the number. If the number is not negative, we just return the number.

Most conditionals take one of two forms. The most basic conditional performs an action only if a condition holds.

if <some condition> holds then

perform some actions

For example,

if your baking powder is old then

double the amount of baking powder in the recipe

More frequently, though, we use the conditional to choose between one of two options.

if <some condition> holds then

perform some actions

otherwise

perform a different set of actions

For example,

if you are at an elevation below 3000 feet then

set the oven temperature to 325 degrees Fahrenheit

otherwise

set the oven temperature to 350 degrees Fahrenheit

or

To compute the absolute value of a real number, n

if n < 0 then

return -1*n

otherwise

return n

Once in a while, you'll find that you want to decide between more than two possibilities. In those situations, we often organize those into a sequence of conditions, which we often refer to as guards.

Scheme has a variety of conditionals that we will explore once we have mastered the basics.

Repetition: repeating tasks

We've seen that subroutines provide one mechanism for repeating a series of actions. If you want to do the same thing again, but with a different input, you just call the subroutine again. However, we frequently find that we want to repeat actions a large number of times and would find it inconvenient, at best, to write the call to the subroutine again and again and again. For situations like this, most programming languages provide structures that let us concisely write algorithms that perform a task multiple times.

-

We might do work until we reach a desired state. For example, in baking, we often stir solids and liquids together until the solids are fully dissolved. And, in teaching me to make bagels, my mother taught me to knead the dough until it reaches a consistency close to that of an earlobe. Many computer scientists refer to these as while loops.

-

We might do work for a specified number of repetitions. For example, some recipes call for you to beat for 100 strokes. Many computer scientists refer to these as for loops.

-

We might do work for each element in a collection. For example, many text analysis routines process each word in a text in turn. Many computer scientists refer to these as for-each loops. Others refer to the process of doing something to each element in a collection as mapping.

You'll discover that there are multiple ways to express each kind of repetition, as well as some more general forms of repetition.

Input and output: communicating with others

Finally, we need a way for our program to communicate with the outside world. Programs may take input from a variety of places (e.g., the keyboard, a file, a network connection) and may provide output (results) somewhere else (e.g., the screen, a file, a network connection).

Some programming systems, including Scamper, let us run programs interactively, typing expressions and seeing the results. But others will explicitly prompt for input and explicitly generate output. You will find reasons to use both the "interactive computing" mode that Scamper provides and the more general mechanisms for input and output.

Self Checks

Check 1: Reflecting on basic algorithms

Pick a non-trivial algorithm, such as a recipe for making chocolate chip cookies, and identify examples from that algorithm for each of the different algorithmic building blocks. If you wrote an algorithm on the first day of class, use that algorithm.

Check 2: Conditionals, subroutines, and repetition

Suppose we want to have the computer "read" a text and decide whether it expresses a positive or negative worldview. In what ways would conditionals, subroutines, and repetition help with this task?

An abbreviated introduction to Scamper

Introduction: Algorithms and programming languages

While our main goals in this course are for you to develop your skills in "algorithmic thinking" and apply algorithmic techniques to problems in the digital humanities, you will find it equally useful to learn how to direct computers to perform these algorithms. Programming languages provide a formal notation for expressing algorithms that can be read by both humans and computers. We will use the Scheme programming language, itself a dialect of the Lisp programming language, one of the first important programming languages. More specifically, we'll use Scamper, a derivative of Scheme custom-built for CSC 151, but we'll frequently use "Scheme" and "Scamper" interchangeably throughout the course.

One thing that sets these languages apart from most other languages is a simple, but non-traditional, syntax. To tell the computer to apply a procedure (subroutine, function) to some arguments, you write an open parenthesis, the name of the procedure, the arguments separated by spaces, and a close parenthesis. For example, here's how you add 2 and 3.

(+ 2 3)

One advantage of this parenthesized notation is that it eliminates the

need for the reader or the computer to know a set of precedence rules

for operations. Consider, for example, the expression 2+3x5. Do you

add first or multiply first? Different programming languages may

interpret it differently. On the other hand, we have to explicitly

state the order, writing either (+ 2 (* 3 5)) or

(* (+ 2 3) 5), using * as the multiplication symbol.

(+ 2 (* 3 5)) (* (+ 2 3) 5)

As this example suggests, we have already started to explore both basic operations (addition and multiplication) and sequencing (through nesting) in Scheme. You should keep three points in mind when writing and reading Scheme expressions.

- Parenthesize all non-trivial expressions. Parentheses tell Scheme that you want to apply a procedure to some values.

- Do not parenthesize basic values. Since there's no procedure call involved with a basic value, we do not write parentheses.

- Write expressions in prefix order. That is, you write the procedure

(function, operation, subroutine) before the arguments, even if it's

something like

+that you would normally put between arguments. - Sequence computations by nesting. If you have intermediate computations that you need to do, you can parenthesize them and put them within another expression.

Beyond numeric expressions

Of course, you can use Scheme for more than arithmetic computations. Here are some examples of computations with involve text.

We can find the length of a string.

(string-length "Jabberwocky")

We can break a string apart into a list of strings.

(string-split "Twas brillig and the slithy toves" " ")

We can find out how many words there are once we've split it apart.

(length (string-split "Twas brillig and the slithy toves" " "))

This operation returned a list, an ordered collection of values.

In line with the parenthesized nature of the language, list values

have the form (list ...) where the values of the list appear,

in order, separated by spaces.

Once we have a list of words, we can find out how long each word is.

(map string-length

(string-split "Twas brillig and the slithy toves" " "))

Computing with images

You've already seen a few of Scheme's basic types. Scamper supports

numbers, strings (text), and lists of values. Of course, these are

not the only types it supports. Some additional types are available

through separate libraries. For example, it is comparatively

straightforward to get Scheme to draw simple shapes if you

add (import image) to the top of your program.

(import image) (circle 60 "outline" "blue") (circle 40 "solid" "red")

We can also combine shapes by putting them above or beside each other.

(import image)

(above (circle 40 "outline" "blue")

(circle 60 "outline" "red"))

(beside (circle 40 "solid" "blue")

(circle 40 "outline" "blue"))

(above (rectangle 60 40 "solid" "red")

(beside (rectangle 60 40 "solid" "blue")

(rectangle 60 40 "solid" "black")))

As you may have discovered in your youth, there are a wide variety of interesting images we can make by just combining simple colored shapes. You'll have an opportunity to do so in the corresponding lab.

"Scheme" versus "Racket" versus "Scamper"

You may hear about another programming language, Racket, from peers who have taken prior versions of CSC 151 or from the various course readings and labs. Racket is a dialect of Scheme. That is, it is a language derived from Scheme that shares many of the same language constructs and libraries, but also improves on the language in various ways.

In the past, CSC 151 has used Racket as it is a modern, full-featured take on Scheme. However, in order to support the themes of the course, we developed our own implementation of Scheme, Scamper. In many ways, Scamper draws on modern Racket-isms, but it isn't truly a descendent of Racket as it tries to retain the simplicity of Scheme and thus doesn't adhere precisely to Racket's language standard.

For our intents and purposes as beginning programmers, Scheme, Racket, and Scamper, are all interchangeable names describing the same "functional language with parentheses" that we use in this course. So don't fret too much if you hear a different name from a peer or see a different name in a reading! However, if want to be precise, we are using:

A dialect of Scheme, custom-built for CSC 151, called Scamper.

Self Checks

Check 1: Reflect on procedures (‡)

Make a list of five or so procedures you've encountered in this reading, the number of parameters, the types of those parameters (e.g., do they require numbers or strings), and their behavior.

For example,

The

lengthprocedure takes one parameter, which must be a list, and returns the number of elements in the list.

Check 2: Some other examples (‡)

Predict the output for each of the following expressions. Be prepared to discuss them in class. Do not try them on your own.

(* (+ 4 2) 2)

(- 1 (/ 1 2))

(string-length "Snicker snack")

(string-split "Snicker snack" "ck")

(circle 10 'solid "teal")

Check 3: Precedence (‡)

Consider the expression 3 - 4 × 5 - 6{:.language-text}.

If we did not have rules for order of evaluation, one possible way to evaluate the expression would be to subtract six from five (giving us negative one), then subtract four from three (giving us negative one), and then multiply those two numbers together (giving us one). We'd express that in Scheme as

(* (- 3 4) (- 5 6))

{:type="a"}

-

What is the "official" way to evaluate that expression?

-

How would you express that in Scheme?

-

Come up with at least two other orders in which to evaluate that expression.

-

Express those other two orders in Scheme.

Getting started with GNU/Linux

Introduction

This lab gives you the opportunity to explore (or at least configure):

- Logging In

- The Xfce window environment

- Firefox

- Working with multiple desktops

- Finishing up and logging out

Please don't be intimidated! Although this lab contains many details which may seem overwhelming at first, these mechanics will become familiar rather quickly. Feel free to talk to the instructor or with a CS tutor if you have questions or want additional help!

Logging in

Short version

- On the computer in front of you, you should see a small window that asks you to log in. If you don't see such a window, try hitting a key on the keyboard or clicking the power button on the monitor.

- Enter your user name. Press the Enter key.

- Enter your password (which won't appear on the screen). Press the Enter key.

- Get help if those previous two steps don't work.

Detailed version

To use any of the computers on Grinnell's GNU/Linux network, one must log in, identifying oneself by giving a user name and a password. MathLAN workstations are configured to use the same username and password as other Grinnell services. If you do not know your Grinnell username or password please tell the instructor soon; we will need to contact ITS to reset your account information.

When a GNU/Linux workstation is not in use, it will display a login screen with a space into which one can type one's user name and, later, one's password. (If the workstation's monitor is dark, move the mouse a bit and the login screen will appear.) This window belongs to xdm, the Xwindows Display Manager. Now, move the pointer onto any part of the box containing the login box. Type in your user name, in lower-case letters, and press the Enter key. The login screen will be redrawn to acknowledge your user name and to ask for your password; type it into the space provided and press Enter. (Because no one else should see your password, it is not displayed on screen as you type it.)

At this point, a computer program that is running on the workstation contacts the College's authentication server to validate the user name and password. If it does not find the particular combination that you have supplied, it prints a brief message saying that the attempt to log in was unsuccessful and then returns to the login screen -- inviting you to try again. Consult the instructor or the system administrator if your attempts to log in are still unsuccessful. (Make sure that you don't have any spaces before your username; that's one of the most common errors.)

The Xfce window environment

Short version

- You'll see something that looks somewhat like Microsoft Windows, but also somewhat different.

- Icons at the bottom of the screen can be used to start programs.

Detailed version

Once you have logged in, a panel will appear at the bottom of the screen. Some other windows also may be visible in other parts of your screen. All of these areas are managed by a special program, called a window manager. On our network, login chores and other administrivia are handled by a program or operating system, called GNU/Linux, and the primary user interaction is handled by a window manager (GUI), called Xfce.

Firefox

Short version

- Start Firefox by clicking on the picture of the small red creature grasping a blue sphere. If Firefox doesn't work, feel free to use Chrome.

- Agree to any dialog boxes that appear. They shouldn't appear again. You also may not see any dialog boxes.

- Navigate to the course website, available at <{{ site.url }}>.

Detailed version

While some materials for this course will be available in paper, almost everything for this course (including electronic versions of paper materials) will be available on the internet. To use Firefox to view materials, such as this course's syllabus and this lab, you may follow these steps:

Click the Firefox icon on the panel, next to the Applications menu. The Firefox icon is a small red creature (presumably a fox) holding a bluish sphere.

If you do not see the Firefox icon, then move the pointer onto the Applications Menu icon at the bottom left of the panel (in Xfce, it looks like a creature on a blue X) and click once with the left mouse button. The applications menu will pop up. Move the mouse over Internet, then Firefox. Click the left mouse button once to launch Firefox.

Some students have discovered that Firefox doesn't launch. If that

happens, it may be that the launcher references the wrong version

of Firefox. Ask one of the class staff to change the launcher

from firefox-esr %u to just firefox. Or just do it yourself.

The materials for this course will walk you through using the Firefox web browser, but a version of Google Chrome is available on MathLAN workstations as well in the Applications menu under the Internet section. You can add this browser your panel if you prefer. (You can add something to your panel by dragging it from the menu to the panel.)

The first time you run Firefox on our network, two message boxes might appear.

- One box might ask you to consent to the terms of a licensing agreement.

- One box might request permission to create some configuration files in your home directory.

You should approve of any requests by clicking on the appropriate word. The pop-up boxes then disappear; you should not see them on subsequent uses of Firefox. It's also okay if the boxes don't appear; Firefox's behavior keeps changing.

Initially, Firefox displays a document containing some default information. You should navigate to the course website at <{{ site.url }}>.

We expect that most of you are already familiar with a Web browser. If not, please consult with one of us or with one of your colleagues.

Firefox options

Short version

- Click on the menu icon (a set of three lines) in the upper-right-hand corner of the screen.

- Click on the Settings menu.

- Click on Home.

- Next to "Homepage and new windows", click on Firefox Home (Default).

- Select Custom URLs....

- Update your home page to something reasonable like this course's website at or the Grinnell Office365 page.

- Quit and restart Firefox to verify that your new home page appears. If you see something other than your home page (e.g., the Grinnell College home page), then ask for help.

Detailed version

Each GNU/Linux user can configure Firefox to reflect her, his, zir, or their own preferences. Between logins, these preferences are stored in a file in the user's home directory; when Firefox is started during a later session, they are reinstated from that file.

Every user of Firefox in this class should establish a base page, a starting point for browsing. Here are the Uniform Resource Locators or URLs of some good choices:

- The main page for this course: <{{ site.url }}>

- The computer science department website: https://www.cs.grinnell.edu

- Grinnell College's main page: https://www.grinnell.edu

- Grinnell's Office365 website: https://office365.grinnell.edu

- GrinnellShare: https://grinco.sharepoint.com

- A page you create.

To establish your base page within Firefox, bring up the primary Firefox menu from the menu bar by clicking on the icon with three lines in the upper-right-hand corner of the window. Then select the Settings operation. You should then see a new screen that permits you to configure many aspects of Firefox. Click the Home button on the left. In the home settings screen, you should see a section labeled New Windows and Tabs, the first entry of which is "Homepage and new windows". Click on Firefox Home (Default). Select Custom URLs.... Paste in one of the recommended URLs. (This does not have to be a permanent change; you can change your mind about this configuration at any time within Firefox.)

To erase the current contents of the Home Page Location(s) box, move the mouse pointer to the left of the first character in the box, press the left mouse button and hold it down, and drag the mouse pointer rightwards until the entire URL is displayed in reverse video, white letters on a black background. Then release the left mouse button and type the new URL; the old one will vanish as soon as you start typing. Once you have entered the new URL, move the mouse pointer onto the button marked OK at the bottom of the pop-up window and click on it with the left mouse button.

You can, of course, simply navigate to the page you want to use as your home page and then click on Use Current Pages.

You may note that the button says "Pages" (plural) rather than "Page" (singular). Since Firefox permits tabbed browsing (that is, you can have "tabs" within the same window that you switch between), you can have a home set of tabs. Particularly obsessive people might want to set up a sequence of tabs with say, links to labs, readings, and beyond.

Note that some folks have a default launcher for Firefox that is configured to start the web browser on a specific page, regardless of the home page you choose. If you don't see your new home page when you restart Firefox, then ask for help.

Working with multiple desktops

If you've kept all those windows open, you'll notice your screen is getting a bit crowded. Fortunately, a tool called the workspace switcher lets you uncrowd your windows by moving them among multiple desktops.

Short version

- Find the workspace switcher icon in the workspace toolbar.

- Click on the switcher to move to a different desktop.

- Drag windows within the switcher to move them to other desktops.

Detailed version

In the toolbar at the bottom of the screen, you should see an icon that looks like a box containing four smaller boxes. (If you don't see it, ask for help.) This is the workspace switcher, a tool that lets you keep your application windows on several different desktops or workspaces.

The upper-left-hand box represents the desktop you are working on right now. It contains a number of still smaller boxes of varying shapes and sizes, which represent the windows you have open. When you move or resize the a window on the desktop, you should see the window's representation in the switcher move as well. Give it a try by wiggling one of your windows around.

Now, click in one of the other three boxes. You should see a new, blank desktop with no windows on it. Where did they go? If you look at the switcher, you'll see they are still in the desktop you started on. Switch back to that desktop.

You can also use the switcher to move windows from one desktop to another. Find the switcher again and identify the box that corresponds to your Firefox window. Click that box and drag it a little ways to the right, onto the next desktop. The window should disappear from the first desktop. If you click onto the desktop to the right, you should see it there.

In this class, you'll usually need to work with multiple windows: The DrRacket window for your programs, a terminal window or two, and a Web browser to read the laboratory exercises and reference materials. As you get settled in over the next few weeks, consider how you might use the switcher to help you organize your workspace efficiently.

By default, the workspace switcher presents four workspaces arranged in two rows of two each. If you want to change this configuration, right-click on the workspace switcher and select Properties to change the number of rows. Clicking on Workspace settings... will let you change the number of workspaces.

Caution: If you use the mouse scroll wheel while your cursor is over the empty desktop area, you will switch workspaces. This sometimes alarms students as it appears to have closed all of your running applications. You can switch back to the correct workspace by clicking in the workspace switcher. If this bothers you, you may want to reduce the number of workspaces to just one.

Finishing up and logging out

If you've successfully logged in, started Firefox, selected your home page, tried DrRacket, configured your account, and played with multiple desktops, you've completed the lab and you can finally stop.

Short version

- To log out, click on the icon at the lower right corner of the screen, and select Log Out.

- Do not turn off the monitor or computers.

Detailed version

When you are done using a workstation, you must log out in order to allow other people to use it. To log out, move the pointer onto your username, at the lower right corner of the screen, and click the left mouse button. A menu will pop up giving several options. Move the pointer onto the words Log Out at the bottom of the menu and click the left mouse button. A confirmation dialog will appear, giving you 30 seconds to change your mind. Click the Log out to log out immediately. The Xfce window manager vanishes, and after a few seconds the login screen reappears; this confirms that you're really logged out.

Please do not turn off the workstation when you are finished. The GNU/Linux workstations are designed to operate continuously; turning them off and on frequently actually shortens their life expectancy. Modern computers use very little power when they are sitting idle, so this is not a significant waste of resources.

An introduction to Scamper

In this laboratory, you will begin to type Scamper expressions. Scamper is the language in which we will express many of our algorithms this semester. Scamper is a particular implementation of Scheme (which varies a bit from the Scheme standard).

Introduction

Many of the fundamental ideas of computer science are best learned by reading, writing, and executing small computer programs that illustrate those ideas. One of our most important tools for this course, therefore, is a program-development environment, a computer program designed specifically to make it easier to read, write, and execute other computer programs. The Scamper language that we use in the course is also an in-browser integrated development environment (IDE for short). Scamper is a dialect of a language called Scheme, which is itself a dialect of a language called Lisp. Although Scamper is a dialect of Scheme, we will often refer to our language of choice as either "Scamper" or "Scheme." (And you may hear us also mistakenly refer to the language as "Racket" which is a more full-featured dialect of Scheme that was used in previous version of the course!)

In this lab, we explore the Scamper language and its associated program development environment.

Preparation

Scamper is available online and works on any machine with access to a modern web browser (i.e., Chrome, Firefox, Safari, or Edge):

Unlike many other web applications you have encountered, Scamper exists as a pure front-end web application. This means that your files are saved locally in your browser rather than on a server. The corollary to this fact is that if you move between web browsers or machines, you will need to manually shuffle your programs around!

Right now, this isn't an issue, but later in the course once we have writte a number of programs, we'll talk about backing up and transferring your work between machines.

Exercises

For many of our in-class lab activities, you will work with a partner. To help you manage the work between yourself and your partner, the lab instructions will specify how you should work with your partner for each exercise.

-

When working collaboratively, make sure to actively engage your partner. Ask questions, share thoughts, and come to a common consensus on the problem. We will have more to share about productive collaborative work in future labs. For now, keep in mind the golden rules of collaborative learning:

Create an environment where your partner feels comfortable sharing, failing, and ultimately learning. You will find that you will also learn better in this environment, even if you think you know the answers already!

The technique we use in this class is a variant of "pair programming", a commonly used technique for programming and for learning computer science. In pair programming, we designate the person at the keyboard as the "driver" and the person working with them as the "navigator". As in most driver/navigator situations, both driver and navigator play important roles. You should designate one of the two of you "A" and one of you as "B". Each problem will designate whether A or B drives.

You should plan to spend about five minutes on each exercise, perhaps a little less. If you begin to exceed this estimated time for an exercise, grab your instructor or class mentor and ask for help.

In this lab, you will work in a Scamper source file that you create and save called shapes.scm.

When turning in your work for this lab, you only need to turn in one copy of this file to Gradescope.

However, you should make sure to include both your name and your partner's name in the Gradescope submission!

Exercise 1: Writing Scamper code

Driver: A

In Scamper, programs exist as text written in a source file, i.e., a program. The Scamper IDE let's you create and manage source files entirely within the web browser. As we shall see by the end of this lab, these files are separate from the files on your computer and must be downloaded to be submitted to Gradescope, sent to your partner, etc.

- Navigate to the Scamper IDE: https://scamper.cs.grinnell.edu

- Click on "Create a new program" to create a new source file and name it

shapes.scm..scmis the filename extension traditionally associated with Scheme program files.

This will open up the Scamper editor pointed at your new file shapes.scm.

Next, let's write a few examples in our new source file.

Type each of the following code snippets, called expressions, into shapes.scm.

After you type each expression, run your program by clicking on the run button (▶) in the toolbar.

See if you get the same values as us!

(Note: make sure to type these snippets rather than copy-pasting them in. It is important to get a programming language, literally speaking, in you fingertips rather than just in your head!)

(sqrt 144) (+ 3 4) (+ 3 (* 4 5)) (* (+ 3 4) 5) (string-append "Hello" " " "World!") (string-split "Twas brillig and the slithy toves" " ") (length (string-split "Twas brillig and the slithy toves" " "))

Of course, one should not just thoughtlessly type expressions and see what value they get. Particularly as you learn Scamper, it is worthwhile to think a bit about the expressions and the values you expect. The self-check in the reading asked you to predict some values. Determine whether your prediction matches what Scamper computes.

(* (+ 4 2) 2)

(- 1 (/ 1 2))

(string-length "Snicker snack")

(string-split "Snicker snack" "ck")

(circle 50 "solid" "teal")

If you get an unexpected error message in one or more cases, that may be part of the intent of this exercise! Feel free to go on to the next exercise, but if you are confused by any of the output that you get, ask the instructor!

Exercise 2: Libraries

Driver: A

As you may have noted, you get an error when you try to make a circle.

(circle 50 "solid" "teal")

Why do you get an error?

Because the circle procedure is not immediately available in Scheme!

Some library functions are automatically included in every program.

The set of these functions is typically called the language's prelude or standard library.